Automate Lead Qualification with WhatsApp AI Chatbots

Most companies treat lead qualification as a sales problem. More training. Better scripts. Tighter CRM hygiene.

But the real issue happens earlier, before a rep ever picks up the phone.

It happens at the first message. The first inquiry. The moment a potential customer reaches out and nobody is there to respond, or worse, the wrong person responds too late.

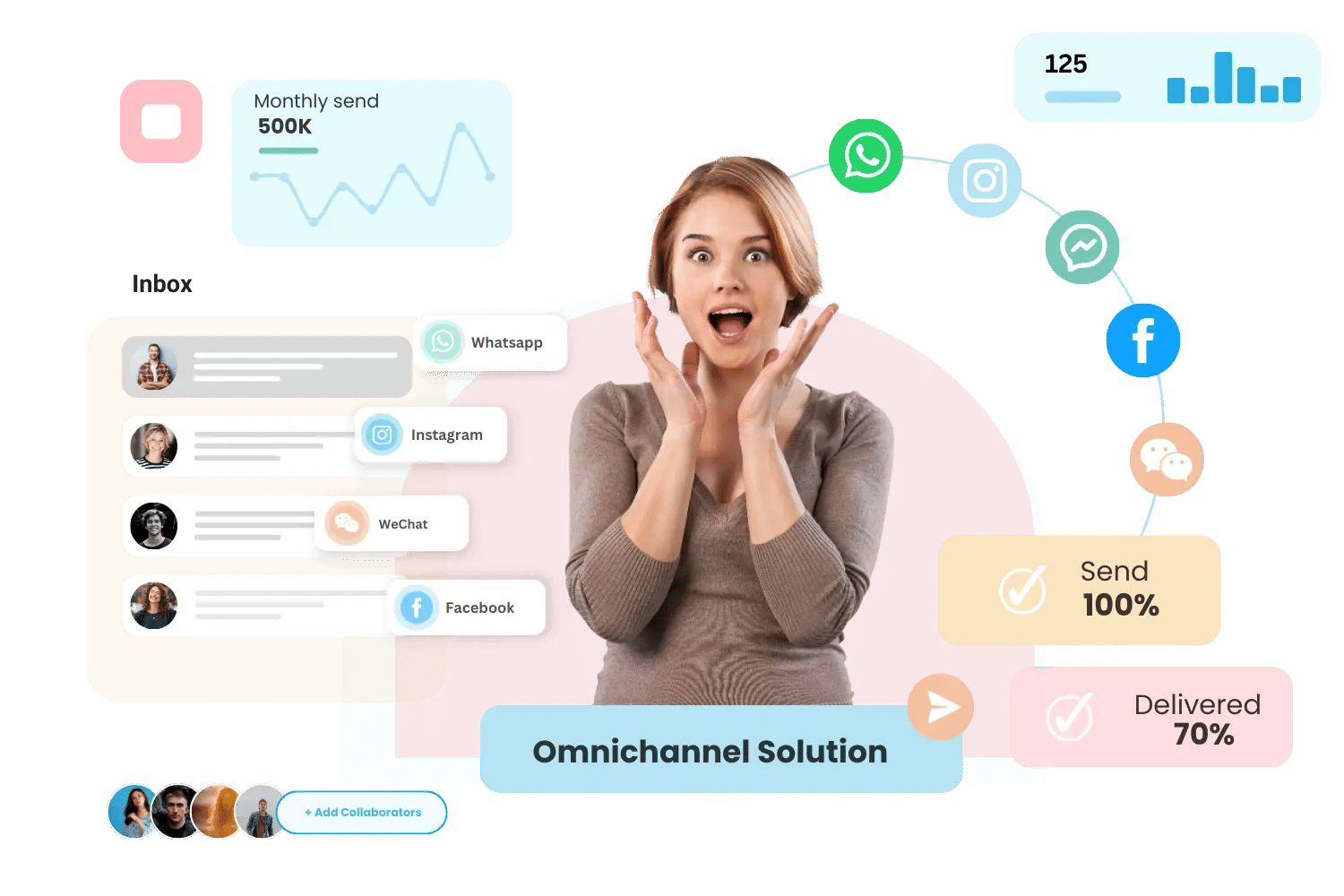

WhatsApp AI chatbots are changing how modern businesses screen, score, and route leads automatically, at scale, without requiring a single human touchpoint to get it started.

And for brands already investing in WhatsApp marketing to drive inbound interest, the qualification layer is where that investment either pays off or quietly bleeds out.

Why Sales Teams Waste Time on the Wrong Leads

Here's a scenario most sales managers recognize immediately.

Your team closes out a busy Monday. Three demos, two proposals, six follow-up calls. Productivity on paper looks great.

But by Friday, only one of those three demos converts into a pipeline opportunity. The other two? One didn't have the budget. One was still six months from a decision.

The time spent? Gone.

This is the lead screening problem, and it isn't solved by better salespeople. It's solved by better systems upstream.

Research consistently shows that SDRs spend a significant portion of their working hours chasing leads that don't fit minimum qualification criteria. In B2B and ecommerce contexts alike, poor lead routing and manual qualification processes erode revenue potential before the sales conversation even begins.

What AI Chatbot Qualification Looks Like

An AI-powered lead qualification chatbot doesn't replace your sales team. It saves them time.

Here's how a WhatsApp sales chatbot built through the WhatsApp API handles the qualification layer:

Step 1: Trigger the conversation

When a prospect fills out a form, clicks an ad, or sends an inquiry via WhatsApp, the chatbot initiates a predefined conversation automatically. For brands running click-to-WhatsApp ads, this is where that traffic lands and where it either gets qualified or goes cold.
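As a rough illustration of the trigger step, the sketch below starts one conversation per prospect no matter which of the three triggers fired first. The trigger names, the `ConversationStarter` class, and the greeting text are all hypothetical; actually sending the message through the WhatsApp API is left out.

```python
from typing import Optional

# Hypothetical trigger names matching the three entry points described above.
TRIGGERS = {"form_submit", "ad_click", "inbound_message"}

# Hypothetical opening message; real wording would be brand-specific.
GREETING = "Hi! Thanks for reaching out. Can I ask a few quick questions?"


class ConversationStarter:
    """Starts at most one qualification conversation per phone number."""

    def __init__(self) -> None:
        self.active = set()  # phone numbers with a conversation in progress

    def handle(self, trigger: str, phone: str) -> Optional[str]:
        """Return the greeting to send, or None if a conversation already exists."""
        if trigger not in TRIGGERS:
            raise ValueError(f"unknown trigger: {trigger}")
        if phone in self.active:
            return None  # avoid greeting the same prospect twice
        self.active.add(phone)
        return GREETING
```

Tracking active numbers keeps a prospect who both clicks an ad and submits a form from receiving two openers.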

Step 2: Ask qualification questions

The bot runs through a defined set of chat qualification questions:

What are you trying to solve?

What's your timeline?

How large is your team?

What's your current tool?

These aren't cold survey questions. They're conversational, contextual, and designed to feel like a natural dialogue instead of an interrogation.
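One simple way to model this step is a session object that asks the questions in order and stores each reply. This is a minimal sketch, not a real chatbot framework: the `QualificationSession` class and the answer keys are assumptions, and only the question wording comes from the list above.

```python
from dataclasses import dataclass, field

# Question wording from the list above; the keys are hypothetical field names.
QUESTIONS = [
    ("problem", "What are you trying to solve?"),
    ("timeline", "What's your timeline?"),
    ("team_size", "How large is your team?"),
    ("current_tool", "What's your current tool?"),
]


@dataclass
class QualificationSession:
    """Tracks one prospect's progress through the question list."""

    answers: dict = field(default_factory=dict)

    def next_question(self):
        """Return the next unanswered question text, or None when finished."""
        for key, text in QUESTIONS:
            if key not in self.answers:
                return text
        return None

    def record_answer(self, reply: str) -> None:
        """Store the reply against the first unanswered question."""
        for key, _ in QUESTIONS:
            if key not in self.answers:
                self.answers[key] = reply.strip()
                return
        raise ValueError("all questions already answered")
```

A production bot would also need to handle off-topic replies and follow-up questions, which this sketch ignores.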

Step 3: Apply lead scoring logic

Based on answers, the bot assigns a lead score.

High-budget, urgent, decision-maker? High-priority route.

Exploring, unclear timeline, junior stakeholder? Nurture sequence.
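The scoring rule above could be sketched as a weighted checklist. Everything numeric here is an assumption made for illustration: the point weights, the 30- and 90-day timeline bands, and the cutoff of 70 are not figures from any real scoring model.

```python
from dataclasses import dataclass


@dataclass
class LeadAnswers:
    """Hypothetical normalized answers extracted from the chat."""

    has_budget: bool
    timeline_days: int
    is_decision_maker: bool


HIGH_PRIORITY_THRESHOLD = 70  # assumed cutoff, tune per business


def score_lead(answers: LeadAnswers) -> int:
    """Return a 0-100 score from budget, urgency, and seniority."""
    score = 0
    if answers.has_budget:
        score += 40
    if answers.timeline_days <= 30:
        score += 30  # urgent
    elif answers.timeline_days <= 90:
        score += 15  # near-term
    if answers.is_decision_maker:
        score += 30
    return score


def route_for(score: int) -> str:
    """Map a score to the high-priority route or the nurture sequence."""
    return "high_priority" if score >= HIGH_PRIORITY_THRESHOLD else "nurture"
```

With these assumed weights, a budgeted decision-maker with a two-week timeline scores 100 and is routed as high priority, while a lead with no budget, a six-month timeline, and no decision authority scores 0 and goes to nurture.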

Step 4: Route to the right agent

High-intent leads get connected to a senior rep immediately. Lower-scoring leads enter automated follow-up flows: reminders, educational content, or a curated WhatsApp catalog showcasing relevant products, until they're ready to buy.
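A minimal routing sketch, assuming a numeric lead score and a fixed list of senior reps: leads at or above a threshold are handed to reps in round-robin order, and everyone else is queued for nurture. The `LeadRouter` class, the rep names, and the threshold of 70 are hypothetical.

```python
from itertools import cycle


class LeadRouter:
    """Assigns high-scoring leads to senior reps and queues the rest."""

    def __init__(self, senior_reps, threshold=70):
        if not senior_reps:
            raise ValueError("at least one senior rep is required")
        self._reps = cycle(senior_reps)  # round-robin over the rep list
        self.threshold = threshold       # assumed cutoff for "high intent"
        self.nurture_queue = []          # lead ids awaiting automated follow-up

    def route(self, lead_id: str, score: int) -> str:
        """Return 'assigned:<rep>' for high-intent leads, else 'nurture'."""
        if score >= self.threshold:
            return f"assigned:{next(self._reps)}"
        self.nurture_queue.append(lead_id)
        return "nurture"
```

Round-robin is only one possible assignment policy; territory, language, or rep availability could replace it without changing the overall shape.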

This is auto qualification done right: no delays, no misroutes, no wasted demo slots.

The Business Impact of Automated Lead Screening

The shift from manual to automated lead qualification changes the math of sales entirely.

Faster response time: WhatsApp chatbots engage leads within seconds of inquiry, not hours. In a world where response time directly correlates with conversion probability, this alone is a significant advantage. Studies suggest that leads contacted within five minutes are far more likely to convert than those contacted after 30 minutes.

Improved sales filtering: Your sales team only sees leads that have already passed the bot's criteria. The result? Higher-quality conversations, shorter sales cycles, and less demo fatigue.

Scalable lead screening: Whether you receive 50 leads a day or 5,000, the qualification process doesn't require additional headcount. The bot applies the same criteria to every lead, consistently and without sick days.

Richer lead data: Every conversation generates structured data. Your CRM receives pre-filled fields, lead scoring values, and conversation history, so sales reps walk into every call well-informed.
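To make "structured data" concrete, here is a sketch of the kind of record a bot might hand to a CRM. The `LeadRecord` fields and the JSON shape are assumptions for illustration; a real CRM integration would follow that CRM's own schema and API.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class LeadRecord:
    """Hypothetical lead record assembled at the end of a chat."""

    phone: str
    score: int
    route: str
    answers: dict = field(default_factory=dict)     # pre-filled CRM fields
    transcript: list = field(default_factory=list)  # conversation history


def to_crm_payload(record: LeadRecord) -> str:
    """Serialize the record to a JSON string with stable key order."""
    return json.dumps(asdict(record), ensure_ascii=False, sort_keys=True)
```

Keeping answers, score, and transcript in one record means a rep can open a single entry and see both what the bot concluded and what the prospect actually said.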

Why WhatsApp Is the Right Channel for Lead Qualification

Email open rates hover around 20%. WhatsApp open rates are commonly reported to exceed 90%.

When you're running a qualification sequence, the channel matters. A prospect who ignores your email drip will often respond to a WhatsApp message because it is personal, immediate, and conversational.

This makes WhatsApp marketing automation the natural home for lead qualification workflows. The platform is already where your customers spend most of their time. Meeting them there with a smart, conversational chatbot reduces friction and increases response rates across the board.

For more information, visit https://anantya.ai/
